Intel TDX has matured considerably over the past two years, but “production-ready” means different things depending on your workload, team, and risk tolerance. This guide gives IT architects and senior engineers a practical framework for evaluating TDX adoption, including where it performs well, where it adds friction, and when it’s worth waiting for the next hardware generation.

Intel Trust Domain Extensions arrived in production silicon with 4th Gen Xeon Scalable (Sapphire Rapids) in 2023. At the time of writing, the ecosystem has had more than two years to mature: Linux kernel support has stabilized, the TDVF (Trust Domain Virtual Firmware) stack has seen meaningful development, and cloud providers and bare metal vendors have begun offering TDX-enabled configurations as standard options rather than special requests.

That maturation matters. Early TDX deployments required significant manual configuration, kernel patching, and attestation infrastructure that wasn’t yet packaged for operational teams. Most of those rough edges have been smoothed. The question for 2026 is no longer “does it work?” but “does it work for my specific workload, at the performance profile I need, with the operational overhead my team can absorb?”

This post addresses that question directly.

What TDX Actually Does (and Doesn’t Do)

Before evaluating TDX for production, it’s worth being precise about what the technology protects and what it doesn’t, because the threat model matters for whether TDX is the right tool.

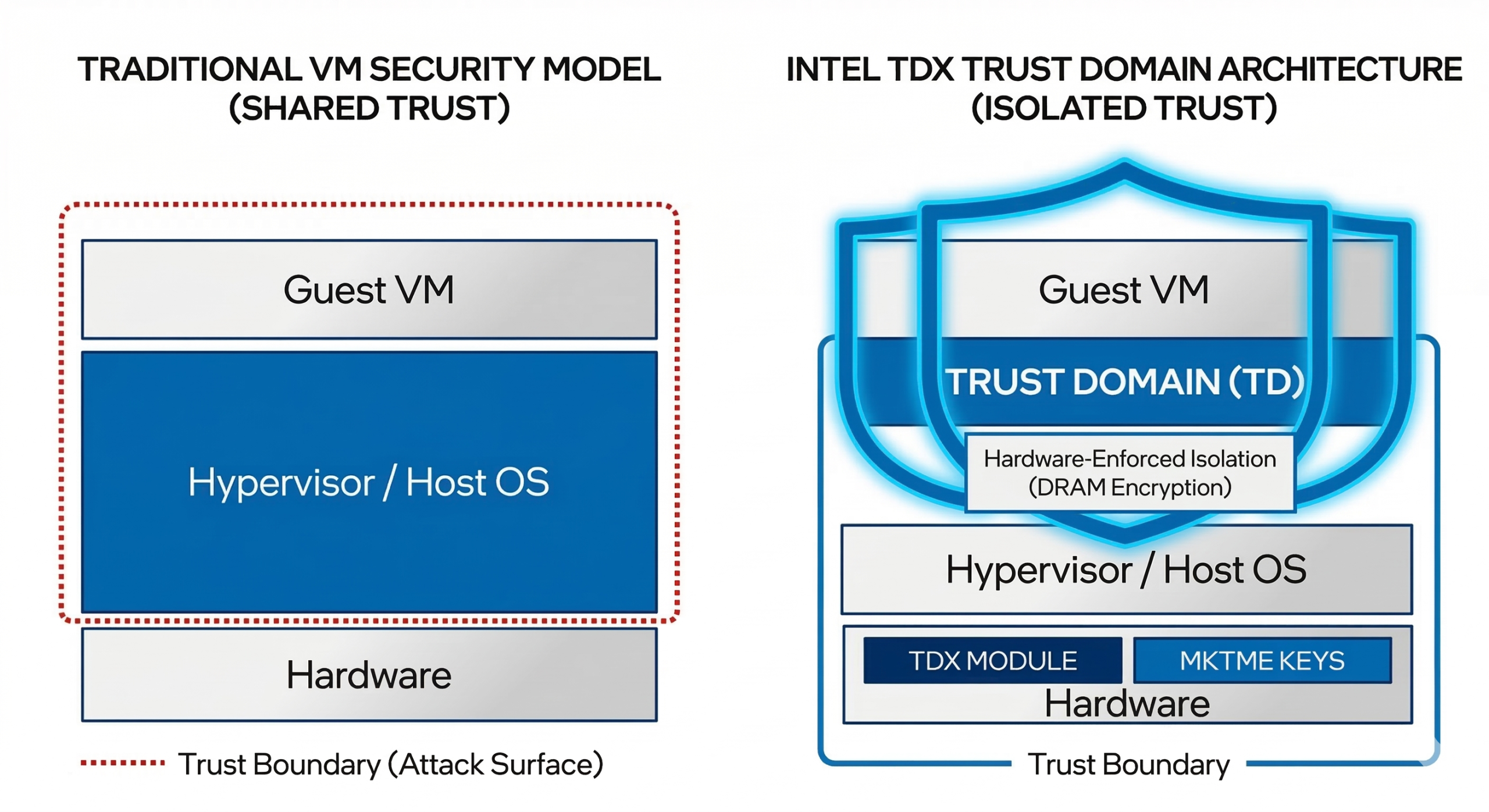

TDX creates hardware-isolated virtual machines called Trust Domains. Memory assigned to a Trust Domain is encrypted with keys managed by the CPU and is inaccessible to the hypervisor, the host OS, and other VMs on the same physical host. This protects against a specific class of threats: privileged software attacks, including a compromised or malicious hypervisor, and physical memory attacks at the host level.

What TDX does not protect against: vulnerabilities in the workload running inside the Trust Domain, network-level attacks, side-channel attacks on the CPU itself (an area of ongoing research), and threats originating from within the guest OS. It also doesn’t replace application-level encryption for data in transit or at rest on disk.

The practical framing: TDX is most valuable when your threat model includes the infrastructure operator. That’s relevant for regulated workloads, multi-tenant environments where you don’t fully control the underlying hardware, and scenarios where cryptographic proof of isolation (remote attestation) is a compliance or contractual requirement.

For workloads where the threat model is purely external attackers reaching the application layer, TDX adds cost and complexity without a proportionate security benefit.

Performance Overhead in Real Scenarios

Performance overhead is the question most architecture teams ask first, and it deserves a direct answer rather than a hedge.

The short version:

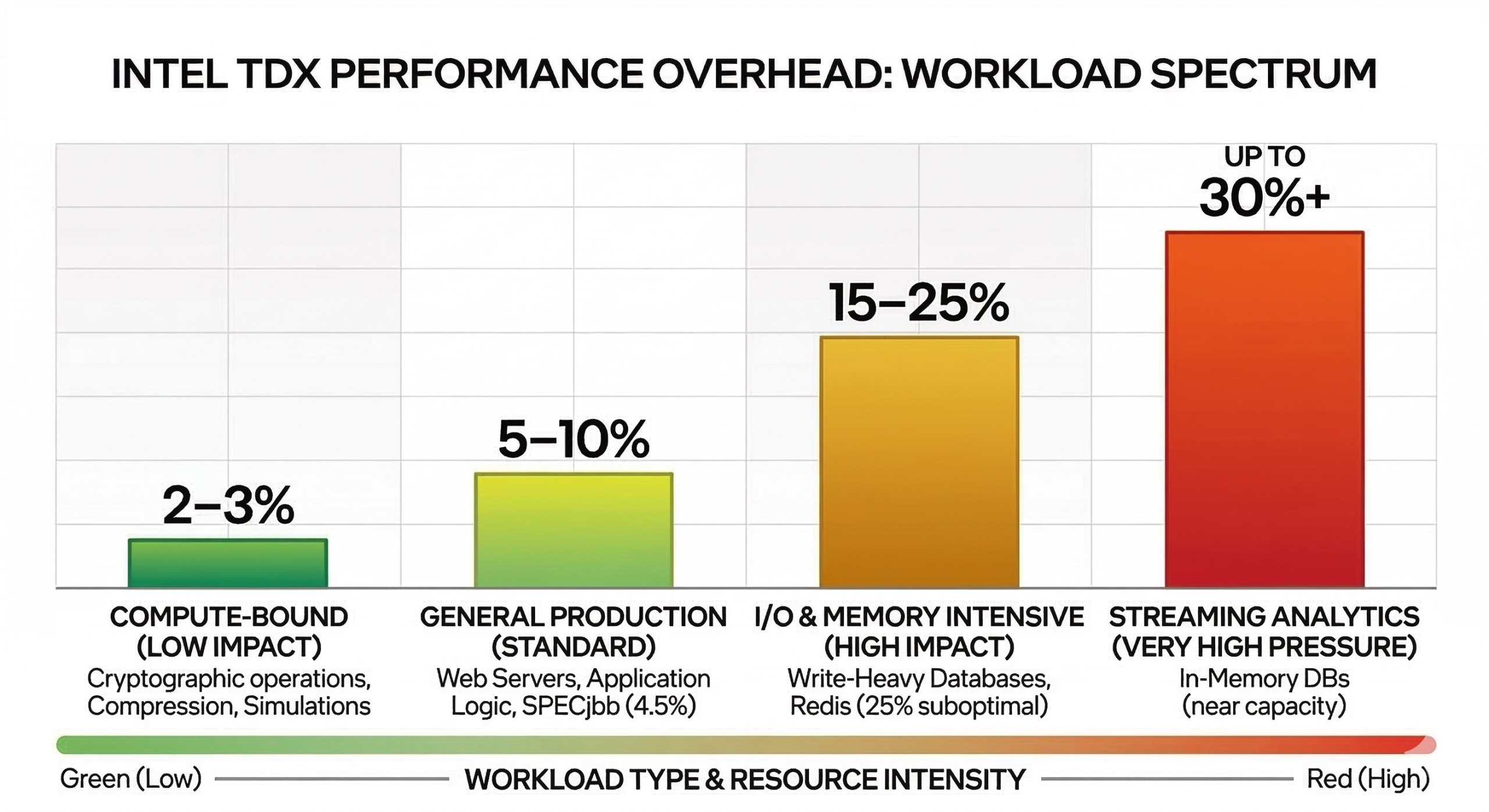

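TDX overhead is workload-dependent: generally in the range of 3–10% for most production workloads on 4th and 5th Gen Xeon, and concentrated in specific operations (memory encryption, I/O bounce buffers, and Trust Domain transitions) rather than spread evenly across all compute. Poorly configured write-heavy I/O is the main exception and can land well above that range.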

The longer version:

Memory-intensive workloads see measurable overhead. TDX encrypts memory using the Multi-Key Total Memory Encryption (MKTME) engine, so workloads with high memory bandwidth utilization, such as in-memory databases, large working sets, and streaming analytics, show a throughput reduction compared to non-TDX configurations. The reduction is usually modest: according to Intel’s own performance testing on 4th Gen Xeon, CPU- and memory-intensive workloads generally see overhead under 5%, with SPECrate 2017 integer workloads dropping around 3% and memory-latency-sensitive workloads like SPECjbb up to 4.5%. I/O-intensive workloads tell a different story and account for the largest numbers: Intel’s data shows a range from 3.6% for read-heavy Redis to 25% for write-intensive database operations under suboptimal configuration, with the overhead driven primarily by bounce buffer usage and TDX transition costs. Sources: Intel TDX Performance Considerations and OpenMetal’s TDX benchmark post.

Compute-bound workloads see minimal overhead. CPU-intensive workloads that don’t saturate memory bandwidth, such as cryptographic operations, compression, and simulation, typically show overhead below 3%. In some cases, overhead is within measurement noise.

I/O-bound workloads depend heavily on whether encrypted storage and network paths are layered on top of TDX or handled separately. TDX itself doesn’t encrypt disk I/O; if you’re also using dm-crypt or similar full-disk encryption inside the Trust Domain, you’re stacking overheads that should be measured together.

VM startup and attestation add latency that matters in some architectures. Remote attestation (verifying to a third party that a Trust Domain is running unmodified on genuine TDX hardware) involves a quote generation process that takes tens to hundreds of milliseconds. For long-running services, this is a one-time cost at startup. For ephemeral workloads or environments that spin up Trust Domains frequently, it becomes a factor in overall system latency.
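To make the startup-cost point concrete, here is a minimal sketch of the arithmetic. The 200 ms attestation time and both lifetimes are hypothetical figures chosen for illustration, not measurements, and `attestation_share` is a helper name introduced here.

```python
def attestation_share(attestation_ms: float, lifetime_s: float) -> float:
    """Fraction of total wall-clock time spent on one startup attestation."""
    if attestation_ms < 0 or lifetime_s <= 0:
        raise ValueError("attestation_ms must be >= 0 and lifetime_s must be > 0")
    attestation_s = attestation_ms / 1000.0
    return attestation_s / (attestation_s + lifetime_s)


# Hypothetical figures: 200 ms of attestation in both cases.
long_running = attestation_share(200, lifetime_s=30 * 24 * 3600)  # 30-day service
ephemeral = attestation_share(200, lifetime_s=2)                  # 2-second job

print(f"30-day service: {long_running:.6%} of lifetime")
print(f"2-second job:   {ephemeral:.1%} of lifetime")
```

The same fixed cost is invisible for the long-running service and a noticeable slice of the short job, which is why launch frequency matters more than the raw attestation time.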

The most accurate way to evaluate overhead for your specific workload is to run your actual application on TDX-enabled hardware before committing to a production architecture. Synthetic benchmarks are useful for establishing baselines, but your workload’s memory access patterns and I/O profile will determine the real number.
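One simple way to turn such a test into a number is to run the same load test several times on each configuration and compare medians. The sketch below assumes you already have throughput samples from both setups; the sample values are made up for illustration, and `overhead_pct` is a hypothetical helper.

```python
from statistics import median


def overhead_pct(baseline_runs: list[float], tdx_runs: list[float]) -> float:
    """Throughput lost under TDX, as a percentage of the baseline median."""
    if not baseline_runs or not tdx_runs:
        raise ValueError("need at least one run per configuration")
    base = median(baseline_runs)
    if base <= 0:
        raise ValueError("baseline throughput must be positive")
    return (base - median(tdx_runs)) * 100.0 / base


# Hypothetical requests/second from five runs of the same test on each setup.
baseline = [10200.0, 10150.0, 10310.0, 10180.0, 10240.0]
with_tdx = [9700.0, 9650.0, 9810.0, 9690.0, 9720.0]

print(f"Measured overhead: {overhead_pct(baseline, with_tdx):.1f}%")
```

Medians are used rather than means so that a single noisy run does not skew the comparison. A negative result means the TDX runs were faster, which usually indicates the difference is within measurement noise.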

Operational Complexity: What You’re Taking On

TDX adds operational surface area. Being honest about this upfront helps teams plan appropriately rather than discovering it mid-deployment.

Kernel and firmware requirements are the first dependency. TDX requires a TDX-aware host kernel, TDX-enabled BIOS/firmware, and a TDX-compatible guest kernel inside the Trust Domain. Upstream Linux support arrived in stages: guest support landed in Linux 5.19, host-side TDX module initialization in 6.8, and KVM support for launching Trust Domains in 6.16, so earlier deployments typically relied on distribution or vendor kernels carrying TDX patches, and production deployments generally benefit from newer stable releases. These requirements are manageable but add specificity to your OS and firmware update process. You can’t treat the host kernel as interchangeable with non-TDX infrastructure.
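A preflight check for these prerequisites might look like the sketch below. It rests on two assumptions that should be verified against your own kernel: that a TDX guest advertises the `tdx_guest` flag in `/proc/cpuinfo`, and that a major.minor comparison is good enough for your purposes. The minimum version is a parameter rather than a fixed value, because the right floor depends on your distribution and on whether it carries backported TDX patches.

```python
import re


def kernel_at_least(release: str, minimum: tuple[int, int]) -> bool:
    """Compare the major.minor prefix of a kernel release string to a floor."""
    match = re.match(r"(\d+)\.(\d+)", release)
    if match is None:
        raise ValueError(f"unrecognized kernel release: {release!r}")
    return (int(match.group(1)), int(match.group(2))) >= minimum


def cpu_flags(cpuinfo_text: str) -> set[str]:
    """Return the flags listed on the first 'flags' line of /proc/cpuinfo text."""
    for line in cpuinfo_text.splitlines():
        if line.startswith("flags"):
            return set(line.partition(":")[2].split())
    return set()


# Sample inputs; on a real system pass platform.release() and the contents
# of /proc/cpuinfo instead. The (6, 8) floor is illustrative only.
sample_cpuinfo = "processor\t: 0\nflags\t\t: fpu vme sse2 tdx_guest\n"
print(kernel_at_least("6.8.0-45-generic", minimum=(6, 8)))
print("tdx_guest" in cpu_flags(sample_cpuinfo))
```

Versions are compared as integer tuples rather than strings so that a release such as 6.10 correctly sorts above 6.8.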

Attestation infrastructure is required for TDX to deliver its core security value. A Trust Domain without remote attestation is isolated in memory but can’t prove that isolation to external parties. Setting up attestation means either integrating with Intel’s attestation service or running your own attestation verifier. This is doable, but it’s not a trivial integration for teams new to the ecosystem. Intel’s DCAP (Data Center Attestation Primitives) stack has improved significantly, and there are now commercial attestation services that simplify deployment, but it’s still additional infrastructure to operate and monitor.
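Whichever verifier you choose, the last step is usually a policy decision: do the measurements in the verified quote match the values you expect for your image? The sketch below illustrates only that comparison. It assumes the quote signature has already been checked by DCAP tooling or an attestation service and that the measurements have been parsed into a plain dictionary; the dictionary layout, the field names, and `mismatched_measurements` are hypothetical and not part of any real library.

```python
import hmac


def mismatched_measurements(report: dict[str, str], expected: dict[str, str]) -> list[str]:
    """Return the names of expected fields that are missing or differ."""
    mismatches = []
    for name, want in expected.items():
        got = report.get(name)
        # Constant-time comparison of the hex strings, ignoring letter case.
        if got is None or not hmac.compare_digest(got.lower().encode(), want.lower().encode()):
            mismatches.append(name)
    return mismatches


# Placeholder values: real MRTD/RTMR measurements are 48-byte SHA-384 digests.
expected = {"mrtd": "aa" * 48, "rtmr0": "bb" * 48}
report = {"mrtd": "AA" * 48, "rtmr0": "cc" * 48}

failures = mismatched_measurements(report, expected)
print("accept" if not failures else f"reject: {failures}")
```

Keeping the expected values in version control alongside the image build makes it easier to update the policy whenever firmware or the guest image changes.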

Debugging is harder inside a Trust Domain. This is a real operational tradeoff. The isolation that protects workloads from hypervisor access also limits some conventional debugging approaches. Core dumps, live memory inspection, and some profiling tools either don’t work or require different approaches inside TDX guests. Teams should plan for this before needing to debug an incident at 2 a.m. on a production workload.

Monitoring requires planning. Standard infrastructure monitoring that relies on hypervisor-level visibility into guest memory or process state won’t work across the Trust Domain boundary. You’ll need to instrument monitoring from inside the guest, which is generally better practice anyway, but requires intentional setup rather than relying on platform defaults.
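As a minimal illustration of in-guest instrumentation, the sketch below derives a memory-utilization figure from the text of `/proc/meminfo`, which an agent running inside the Trust Domain can read directly. The function names are hypothetical, the sample text is abbreviated, and a production agent would export the value to whatever metrics system you already use.

```python
def parse_meminfo(text: str) -> dict[str, int]:
    """Parse /proc/meminfo-style text into {field: value in kB}."""
    values = {}
    for line in text.splitlines():
        key, _, rest = line.partition(":")
        fields = rest.split()
        if fields and fields[0].isdigit():
            values[key.strip()] = int(fields[0])
    return values


def memory_used_pct(meminfo: dict[str, int]) -> float:
    """Percentage of memory in use, based on MemTotal and MemAvailable."""
    total = meminfo["MemTotal"]
    return (total - meminfo["MemAvailable"]) * 100.0 / total


# Abbreviated sample; inside the guest, read the real file instead.
sample = "MemTotal:       1000 kB\nMemFree:         100 kB\nMemAvailable:    250 kB\n"
print(f"memory used: {memory_used_pct(parse_meminfo(sample)):.1f}%")
```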

OpenMetal Hardware Support: v4 and What’s Coming in v5

On OpenMetal’s current v4 hardware, TDX support is available on the XL and XXL tiers. Both configurations run dual Intel Xeon Gold 6530 processors (5th Gen Scalable) with fully populated memory channels, satisfying Intel’s requirements for TDX out of the box. The XXL’s 2TB configuration gives teams additional headroom for memory-intensive Trust Domain workloads, but neither tier requires additional configuration to enable TDX. Our Intel TDX hardware requirements guide covers the specific BIOS configuration and kernel prerequisites for getting started.

v5 hardware is coming. OpenMetal’s next-generation infrastructure, built on Intel Xeon 6 processors, is currently available for pre-order and scheduled to ship in early-to-mid 2026.

The XL v5 (dual Intel Xeon 6530P, 64 cores, 1TB DDR5-6400) has TDX enabled out of the box. The 16x 64GB RDIMM configuration across both sockets meets Intel’s fully populated memory channel requirements without additional configuration.

The Large v5 (dual Intel Xeon 6517P, 32 cores, 512GB DDR5-6400) also supports TDX, though OpenMetal recommends 1TB RAM for optimal TDX configurations. The base 512GB configuration can run TDX workloads, but teams planning memory-intensive Trust Domains should factor the upgraded memory configuration into their planning.

Beyond TDX support, v5 brings DDR5-6400 across all SKUs, a meaningful improvement in memory bandwidth over the lower-speed DDR5 on v4 hardware (5th Gen Xeon is also a DDR5 platform), along with Micron 7500 MAX NVMe storage, 4x 25 Gbps networking, and TPM included across the full lineup. For workloads where memory bandwidth is a primary concern, the jump to DDR5-6400 should reduce the practical impact of TDX encryption overhead compared to the same workloads on v4, because there is more bandwidth headroom to absorb it.
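For a rough sense of scale, theoretical peak memory bandwidth per socket is the transfer rate multiplied by 8 bytes per transfer and by the number of populated channels. The sketch below applies that formula. The 8-channel count follows from the 16-DIMM dual-socket configuration described above, while the 4800 MT/s comparison point is a hypothetical baseline; substitute the rated speed of the DIMMs you run today. Real sustained bandwidth will be lower than these peaks.

```python
def peak_bandwidth_gbs(transfer_rate_mts: int, channels: int, bytes_per_transfer: int = 8) -> float:
    """Theoretical peak bandwidth per socket in GB/s (MT/s x bytes x channels)."""
    return transfer_rate_mts * bytes_per_transfer * channels / 1000.0


v5 = peak_bandwidth_gbs(6400, channels=8)
baseline = peak_bandwidth_gbs(4800, channels=8)  # hypothetical comparison point

print(f"DDR5-6400: {v5:.1f} GB/s per socket")
print(f"baseline:  {baseline:.1f} GB/s per socket")
print(f"ratio:     {v5 / baseline:.2f}x")
```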

When to Adopt TDX Now vs. Wait

This is the question the title of this post promises to answer, so here’s a direct take.

Adopt TDX now if:

Your threat model explicitly includes the infrastructure operator or hypervisor layer. Regulated industries (financial services, healthcare, defense contracting) with specific requirements around data isolation from infrastructure providers are the clearest case. If your compliance framework requires cryptographic proof that workloads run in isolated environments, TDX attestation delivers that in a way that policy controls alone cannot.

Your workload profile is compute-bound or I/O-bound with moderate memory pressure. If your performance analysis shows workloads staying well under memory bandwidth limits, TDX overhead will be minimal and the security benefit comes at low cost.

You’re running confidential multi-party workloads. Analytics on data from multiple parties who can’t share raw data, collaborative AI training on proprietary datasets, or any architecture where cryptographic isolation between tenants is a product requirement rather than a nice-to-have. TDX provides the hardware foundation for these architectures in a way that software isolation cannot.

You’re planning a long-lived production deployment on dedicated hardware. The operational investment in TDX pays off over multi-year deployments where the attestation infrastructure and modified operational tooling amortize across the full deployment lifetime.

Consider waiting if:

Your workload is memory bandwidth-intensive and performance headroom is limited. If you’re running in-memory databases or streaming analytics near capacity, TDX overhead may push you into hardware upgrades you weren’t planning. In this case, v5 hardware with DDR5-6400 is worth waiting for, since the higher baseline bandwidth reduces the relative overhead impact.

Your team has no prior experience with confidential computing. TDX isn’t a switch you flip. The attestation infrastructure, modified debugging practices, and kernel/firmware requirements represent a real learning curve. Unless there’s a specific compliance driver creating urgency, a structured proof-of-concept before a production commitment is the right approach.

Your threat model doesn’t include privileged software attacks. If your security concerns center on application vulnerabilities, network attacks, or endpoint compromise, TDX doesn’t address those surfaces. The investment would be better directed elsewhere.

The timing calculus changes somewhat with v5 hardware arriving in early-to-mid 2026. If you’re currently on v4 and evaluating TDX for a workload that’s sensitive to memory bandwidth, waiting for v5 hardware with DDR5-6400 may be worth the short delay. If you have a compliance requirement driving an immediate timeline, the XXL v4 is a proven configuration that works today.
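The criteria in this section can be written down as a small decision function, which is sometimes useful for making the evaluation explicit in a design review. This is one possible reading of the guidance above, not an official decision procedure; the field names and the ordering of the checks are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class Evaluation:
    operator_in_threat_model: bool   # hypervisor/infrastructure operator is a concern
    compliance_deadline: bool        # a compliance driver forces an immediate timeline
    memory_bandwidth_bound: bool     # workload is memory bandwidth-intensive
    limited_headroom: bool           # already running near capacity
    team_has_cc_experience: bool     # prior confidential computing experience


def recommend(e: Evaluation) -> str:
    """Map the adopt-or-wait criteria to a single recommendation."""
    if not e.operator_in_threat_model:
        return "invest elsewhere"
    if e.compliance_deadline:
        return "adopt now"
    if e.memory_bandwidth_bound and e.limited_headroom:
        return "wait for v5"
    if not e.team_has_cc_experience:
        return "proof-of-concept first"
    return "adopt now"


print(recommend(Evaluation(True, False, True, True, True)))
```

The threat-model check comes first because none of the other criteria matter if TDX does not address the attack surface you care about.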

Practical Starting Point

If you’re moving from evaluation to a proof-of-concept, the OpenMetal deployment guide for confidential computing workloads covers the concrete steps for standing up TDX on OpenMetal infrastructure. The TDX performance benchmarks post has workload-specific overhead data for blockchain and AI use cases that may be relevant depending on your application profile.

For teams earlier in the evaluation, perhaps still working through the threat model or building the internal case for confidential computing investment, the confidential computing infrastructure overview is a useful starting point, and the hardware details page covers the specific TDX configuration on OpenMetal servers.

And of course if you have any questions about whether TDX is right for your needs, or you’d like to explore compatible hardware options, let us know!
