Q: How do OpenMetal storage servers connect to compute nodes?

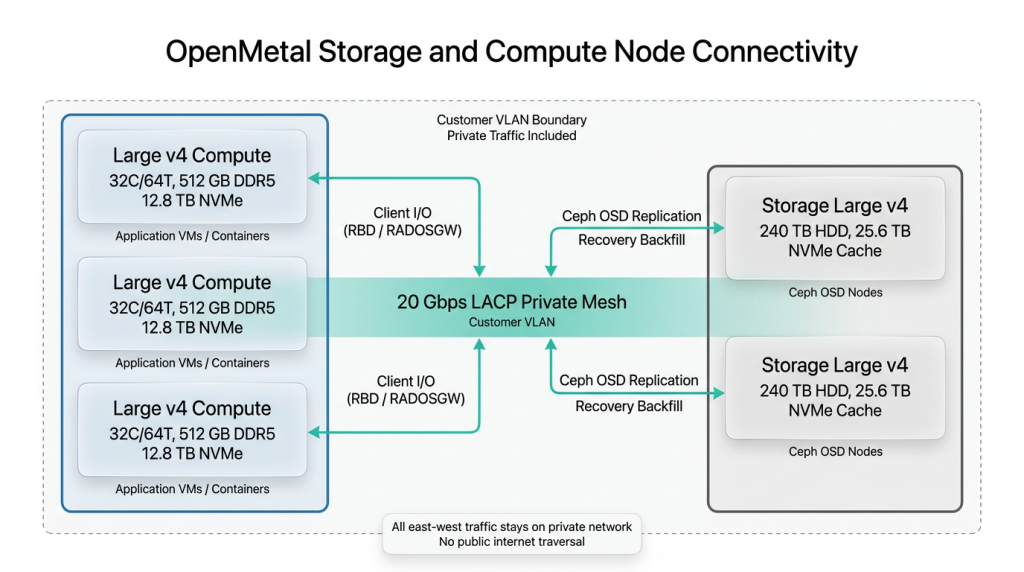

Storage servers connect to compute nodes over the same 20 Gbps LACP-bonded private mesh that links all OpenMetal bare metal servers. Traffic runs on customer-specific VLANs, and all private traffic is included at no additional cost.

Each Storage Large v4 has dual 10 Gbps NICs bonded via LACP for 20 Gbps of aggregate private bandwidth. This bond carries all Ceph traffic: OSD replication between storage nodes, client I/O from compute nodes accessing block (RBD) or object (RADOSGW) storage, and recovery backfill during drive or node replacement. Ceph rebalancing can temporarily saturate a network link, so the 20 Gbps bond is sized to absorb that load without starving application I/O from compute nodes.
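The 20 Gbps figure is an aggregate across two 10 Gbps member links. An LACP bond typically assigns each flow to one member link by hashing packet header fields, so packets of one connection stay in order on one link. As a result, a single TCP connection tops out at 10 Gbps, while many parallel connections (Ceph clients and OSDs open many) spread across both links. The sketch below uses a simplified hash loosely modeled on the `layer3+4` transmit hash policy described in the Linux bonding documentation; it is not the exact kernel or switch algorithm, and the IP addresses, ports, and function names are hypothetical.

```python
import ipaddress
from collections import Counter

# Assumption: two 10 Gbps member links, as on the Storage Large v4 bond.
LINK_GBPS = 10
MEMBER_LINKS = 2


def flow_hash(src_ip: str, dst_ip: str, src_port: int, dst_port: int,
              n_links: int = MEMBER_LINKS) -> int:
    """Pick a member link for one TCP flow.

    Simplified hash loosely modeled on the Linux bonding layer3+4 policy
    (hypothetical helper): every packet of a given flow maps to the same link.
    """
    h = (src_port << 16) | dst_port
    h ^= int(ipaddress.IPv4Address(src_ip)) ^ int(ipaddress.IPv4Address(dst_ip))
    h ^= h >> 16
    h ^= h >> 8
    return h % n_links


def link_loads(flows, n_links: int = MEMBER_LINKS) -> Counter:
    """Count how many flows land on each member link."""
    return Counter(flow_hash(*f, n_links=n_links) for f in flows)


def best_case_gbps(flows) -> int:
    """Upper bound on bond throughput: each link carrying at least one flow adds LINK_GBPS."""
    return len(link_loads(flows)) * LINK_GBPS


# One large transfer between a compute node and a storage node (hypothetical
# addresses; 6800 is an illustrative port in Ceph's default OSD port range).
single = [("10.0.0.11", "10.0.0.21", 40000, 6800)]
# Many parallel connections distinguished by source port.
many = [("10.0.0.11", "10.0.0.21", port, 6800) for port in range(40000, 40064)]

print(best_case_gbps(single))             # → 10
print(best_case_gbps(many))               # → 20
print(sorted(link_loads(many).values()))  # → [32, 32]
```

In this model, consecutive source ports alternate between the two links, so 64 connections split evenly. Real distributions depend on the configured hash policy on both the host and the switch.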

Compute nodes (Large v4, XL v4, XXL v4) use the same LACP-bonded private mesh. All nodes in a deployment share customer-specific VLANs, so east-west traffic between compute and storage stays on the private network and never traverses the public internet. No additional network configuration is required to connect storage nodes to an existing bare metal compute deployment. OpenMetal’s onboarding team helps design cluster topology, including VLAN segmentation for separating Ceph replication traffic from application traffic when performance isolation is needed.
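In Ceph, the usual way to separate replication from client traffic is the `public_network` and `cluster_network` options. The public network carries monitor traffic and client I/O from compute nodes. The cluster network carries OSD replication and recovery traffic between storage nodes. The sketch below assumes each network maps to its own VLAN. The VLAN IDs, subnets, and helper names are hypothetical placeholders, not OpenMetal defaults. It checks that both subnets are private and non-overlapping, then renders the `[global]` section of a `ceph.conf`.

```python
import configparser
import io
import ipaddress

# Hypothetical VLAN plan: IDs and subnets are placeholders, not OpenMetal defaults.
VLAN_PLAN = {
    "public": {"vlan_id": 110, "subnet": "10.10.0.0/24"},   # monitors + client I/O from compute
    "cluster": {"vlan_id": 120, "subnet": "10.20.0.0/24"},  # OSD replication + recovery/backfill
}


def validate_plan(plan):
    """Return parsed networks, rejecting public ranges, bad VLAN IDs, or overlaps."""
    nets = {}
    for role, entry in plan.items():
        net = ipaddress.ip_network(entry["subnet"])
        if not net.is_private:
            raise ValueError(f"{role} subnet {net} is not a private range")
        if not 1 <= entry["vlan_id"] <= 4094:  # 0 and 4095 are reserved in 802.1Q
            raise ValueError(f"{role} VLAN ID {entry['vlan_id']} is out of range")
        nets[role] = net
    if nets["public"].overlaps(nets["cluster"]):
        raise ValueError("public and cluster subnets overlap")
    return nets


def render_ceph_conf(plan) -> str:
    """Render the [global] network options for ceph.conf as text."""
    nets = validate_plan(plan)
    conf = configparser.ConfigParser()
    conf["global"] = {
        "public_network": str(nets["public"]),
        "cluster_network": str(nets["cluster"]),
    }
    buf = io.StringIO()
    conf.write(buf)
    return buf.getvalue()


print(render_ceph_conf(VLAN_PLAN))
```

With this layout, only the storage nodes need interfaces on the cluster network. Compute nodes acting as Ceph clients need only the public network.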

